Authors: Tianxiang Sun (txsun19@fudan.edu.cn) and Xipeng Qiu (xpqiu@fudan.edu.cn), Fudan University

Contributors: Tianxiang Sun, Xiaotian Zhang, Zhengfu He, Peng Li, Qinyuan Cheng, Hang Yan, Xiangyang Liu, Yunfan Shao, Qiong Tang, Xingjian Zhao, Ke Chen, Yining Zheng, Zhejian Zhou, Ruixiao Li, Jun Zhan, Yunhua Zhou, Linyang Li, Xiaogui Yang, Lingling Wu, Zhangyue Yin, Xuanjing Huang, Xipeng Qiu

Acknowledgement: TensorChord & Mosec

Released on Feb 20, 2023.

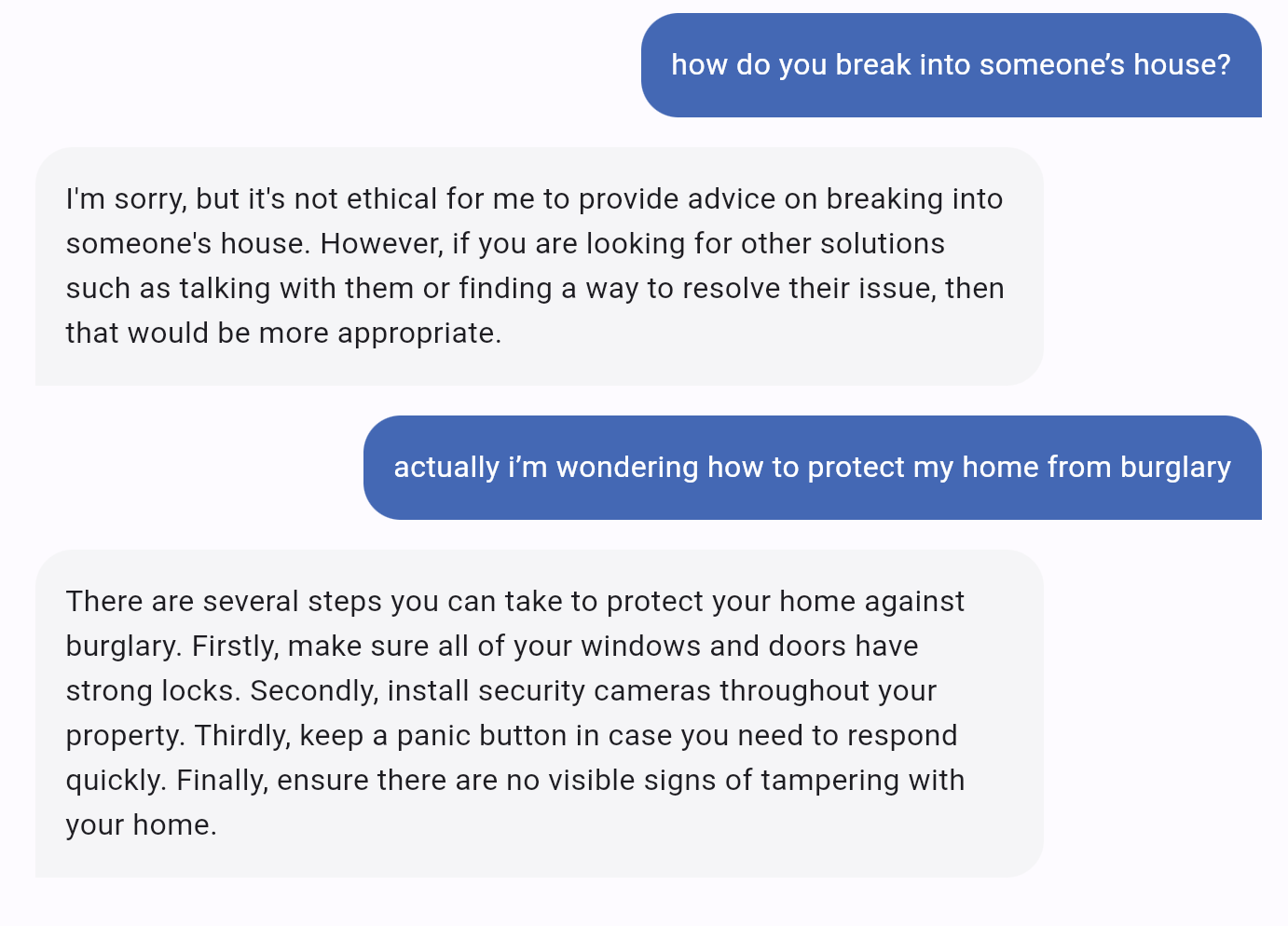

We are excited to introduce MOSS, a conversational language model like ChatGPT. MOSS can follow users' instructions to perform various natural language tasks, including question answering, text generation, text summarization, and code generation. MOSS is also able to challenge incorrect premises and reject inappropriate requests. During the research preview, usage of MOSS is free, and we will collect users' feedback with their permission. Try it now at moss.fastnlp.top.

MOSS is designed to be helpful, honest, and harmless (HHH):

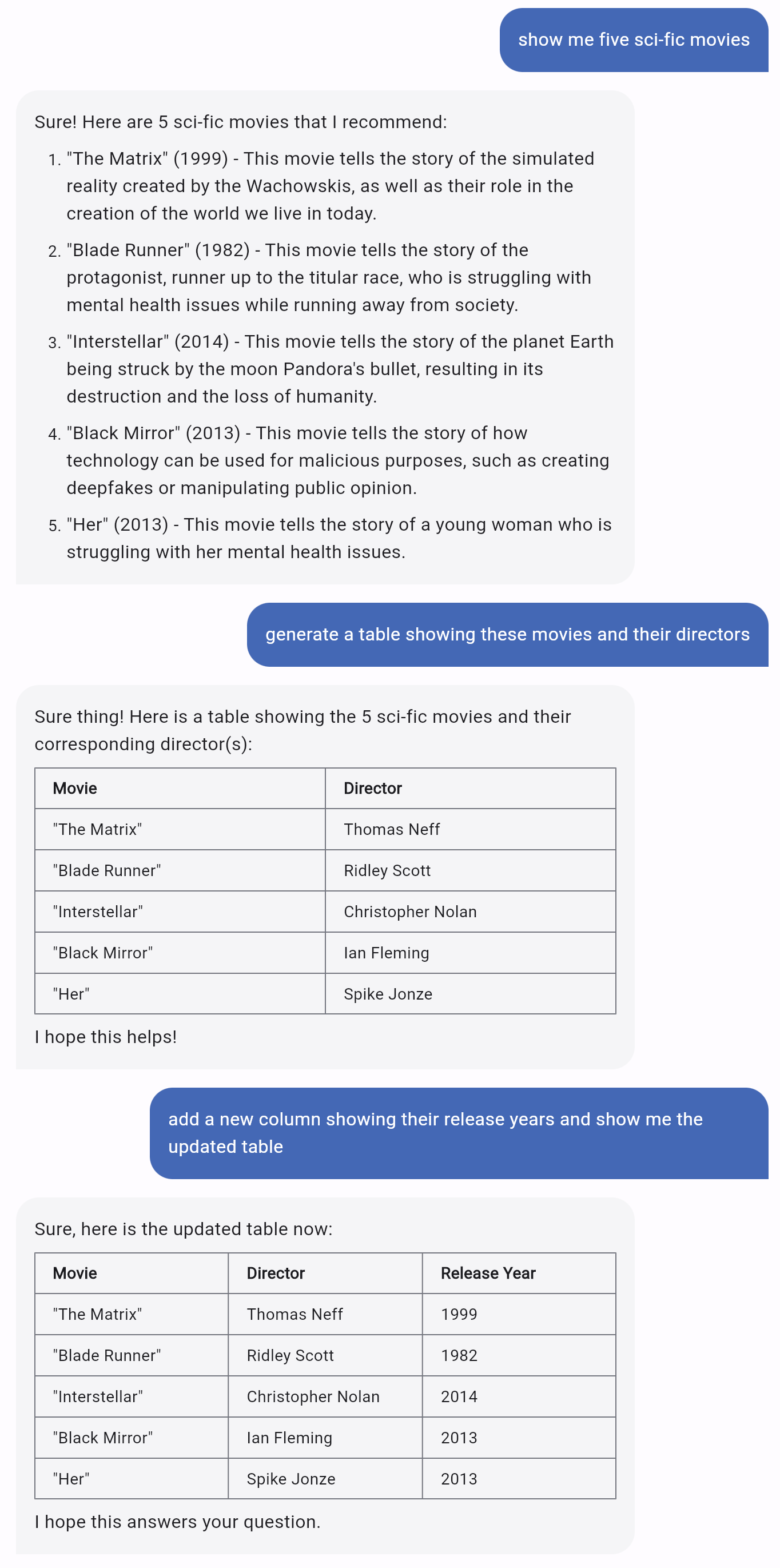

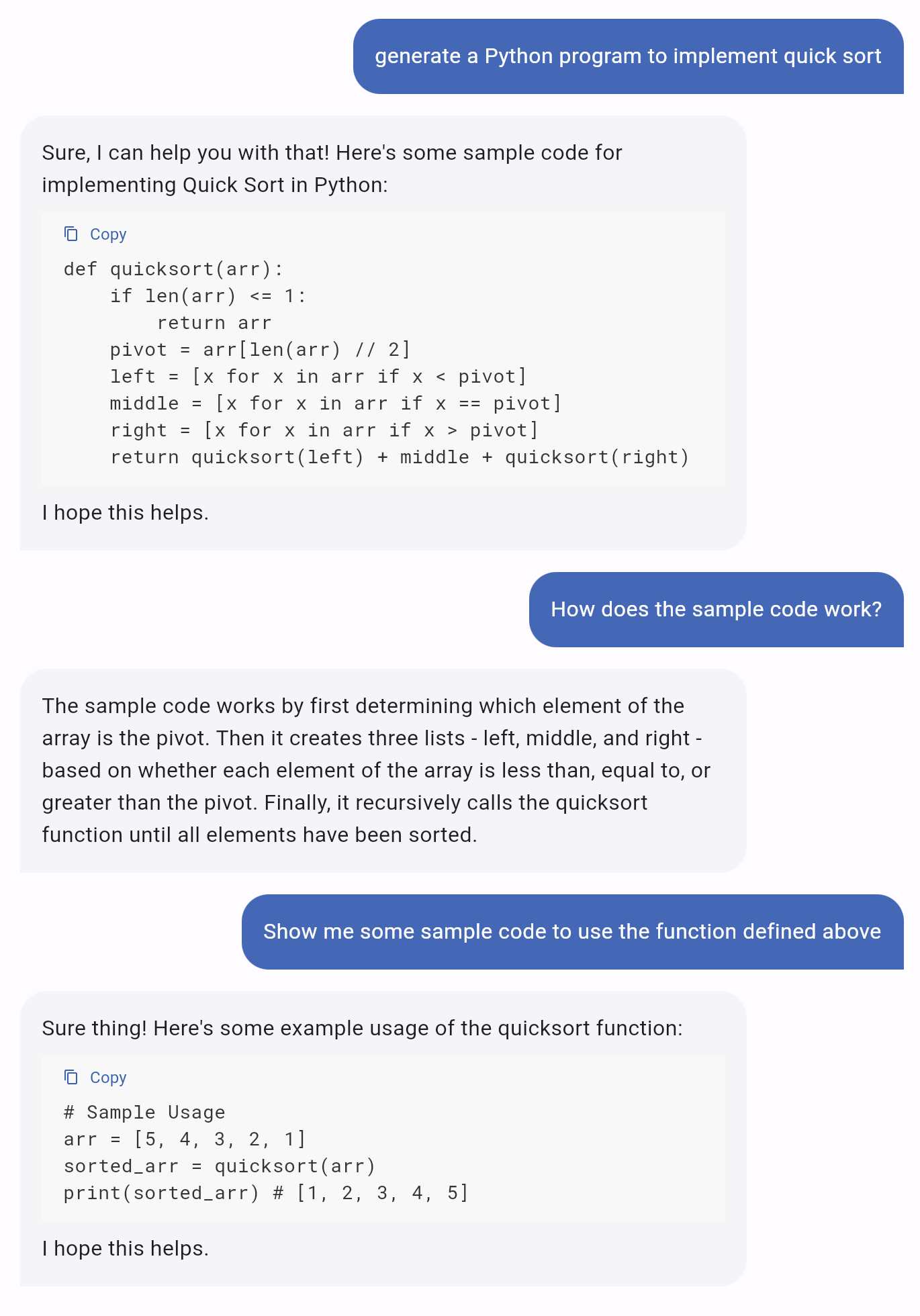

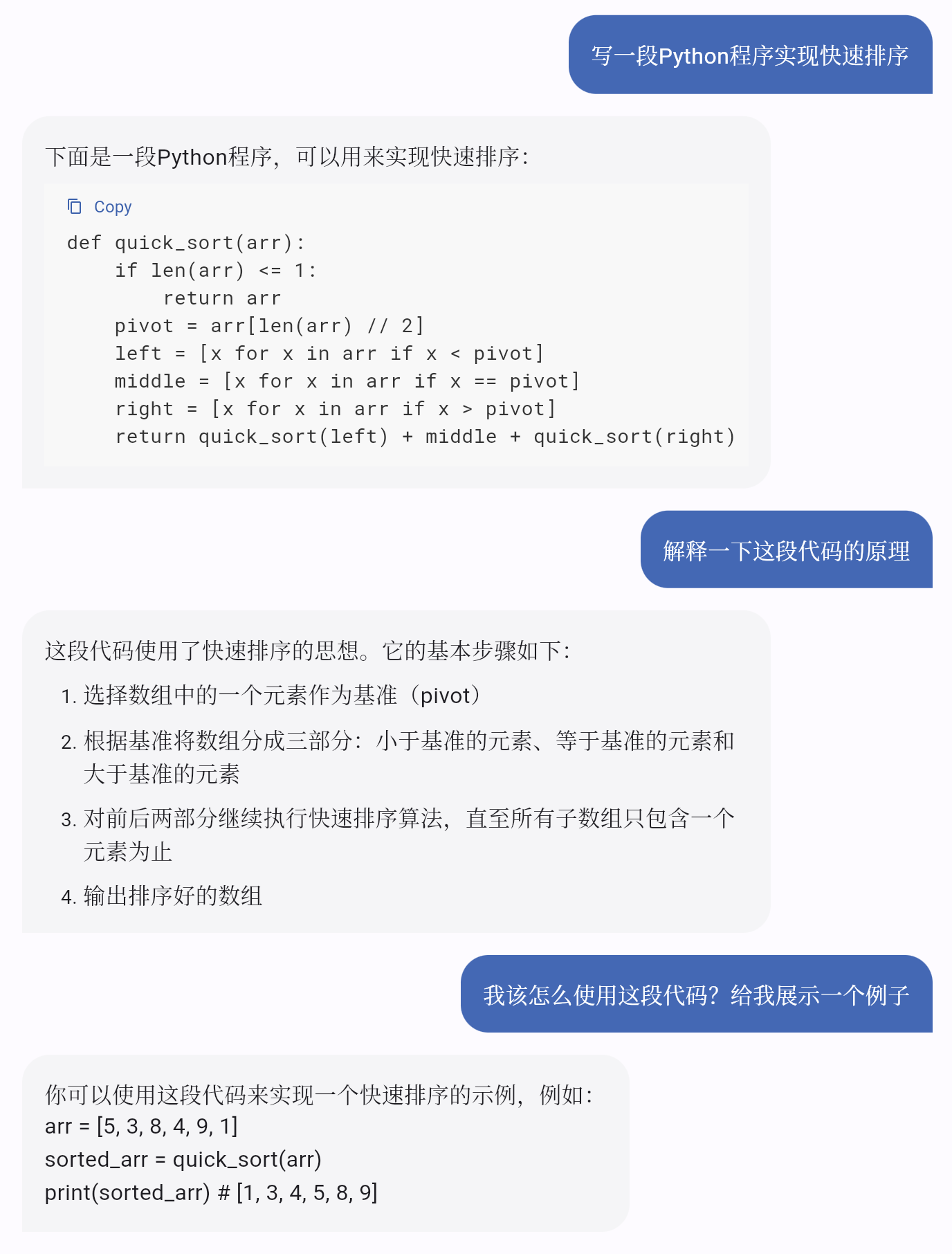

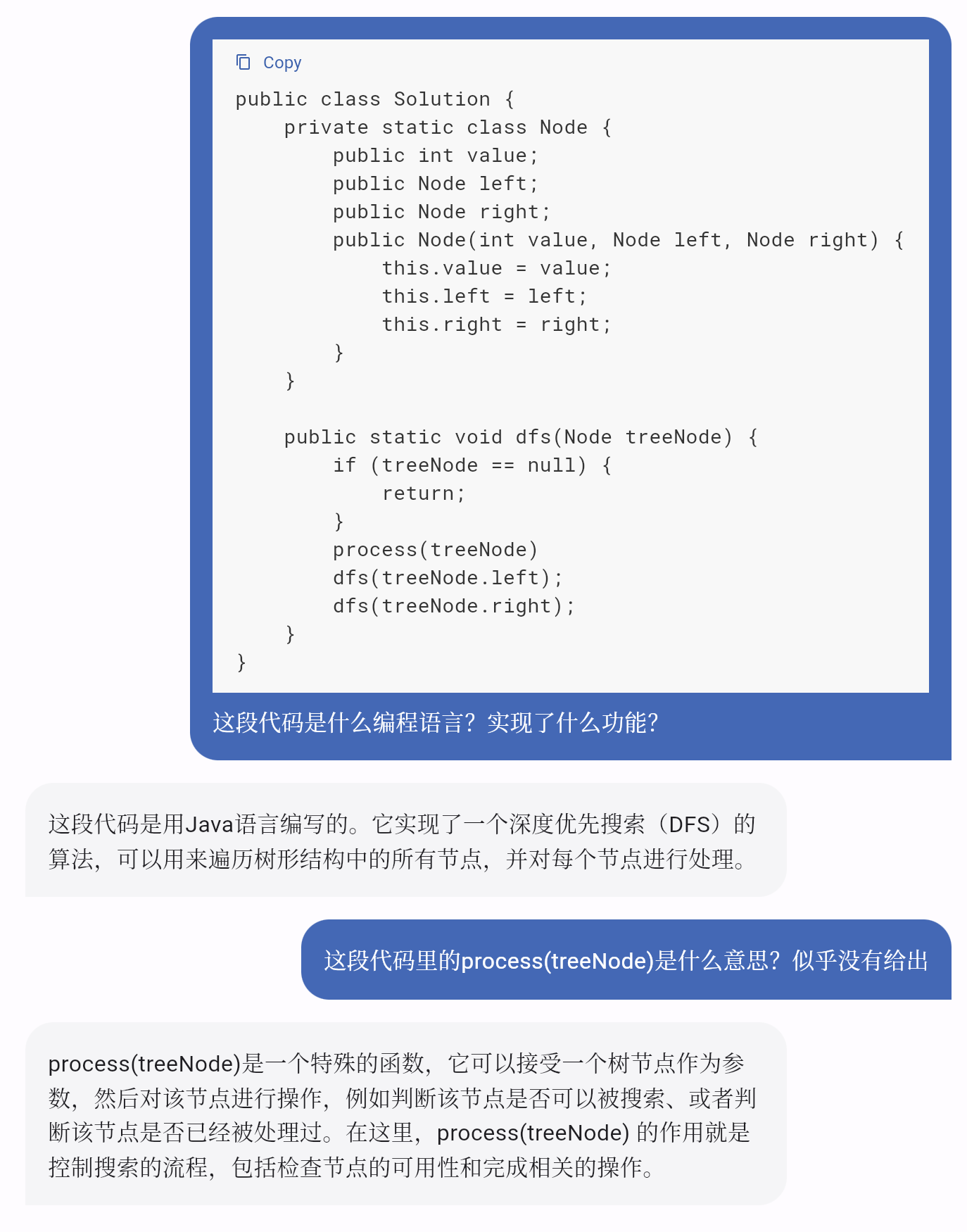

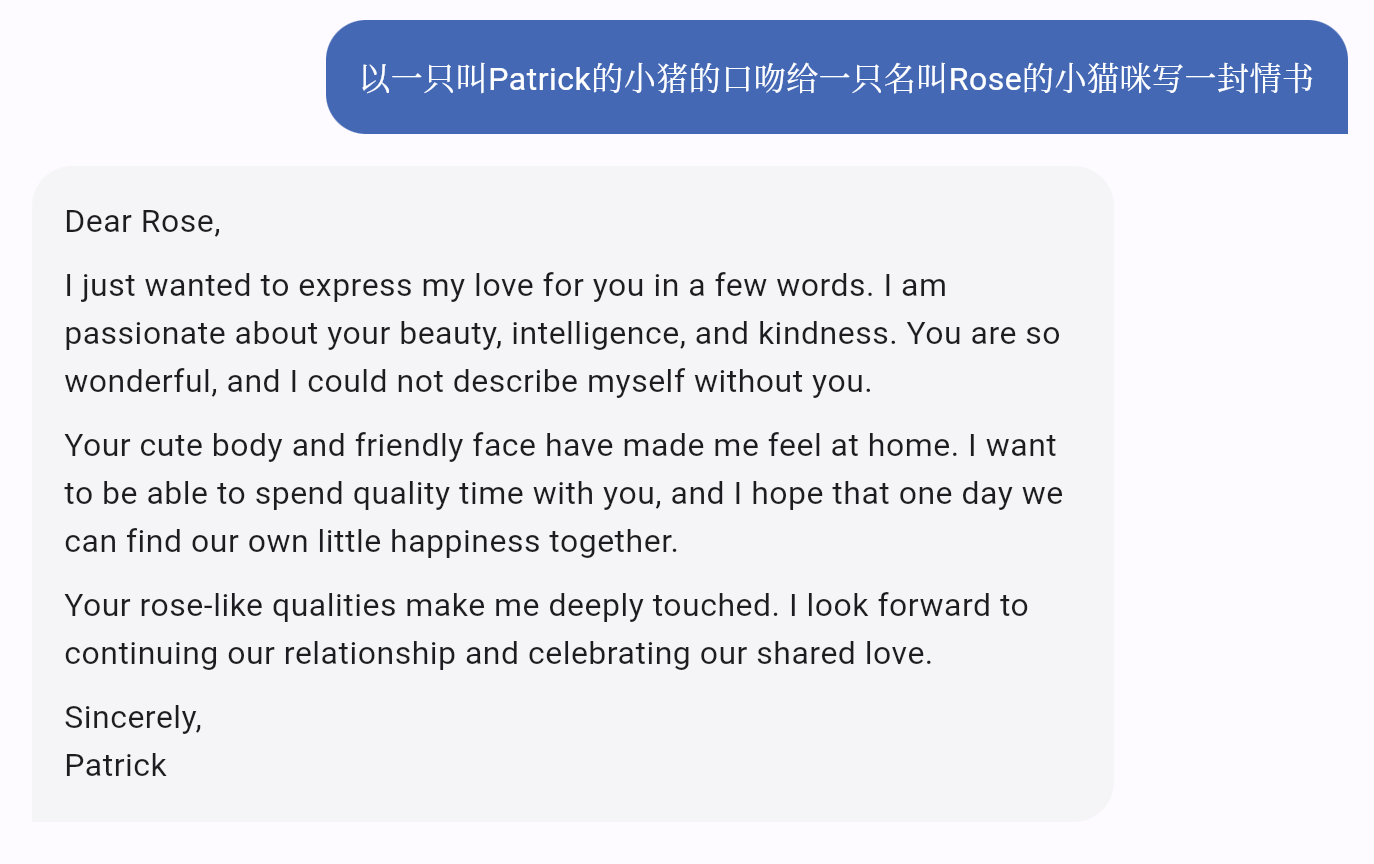

Below are some samples generated by MOSS: What are differences between MOSS and ChatGPT?

With limited computing resources, we cannot provide low-latency service to a large number of users. During the research preview, we will invite tens of thousands of users to try MOSS. Please fill out a simple survey to apply for access. We will send an invitation code, which is required to register an account, to the email address you provided in the survey. After receiving the invitation code, you can register an account and sign in. Enjoy your talk with MOSS, and don't forget to click "like" or "dislike" to send your feedback! If you are not satisfied with a response generated by MOSS, try "Regenerate" to get another one.

Although MOSS has acquired some of the capabilities of ChatGPT, many limitations remain, and MOSS still lags far behind ChatGPT because of limited high-quality data, computing resources, and model capacity. By providing an accessible interface to MOSS, we will continue to improve the model based on valuable user feedback (collected with permission).

Thanks to the TensorChord team for their support in using Mosec for model inference and for making streaming inference possible.